Overview

Our OpenAI integration allows you to easily perform AI-powered tasks, such as summarizing text, answering questions, generating images, fine-tuning, and much more.

Installing the OpenAI packages

Authentication

To use the OpenAI API with Trigger.dev, you’ll need an API key from OpenAI. If you don’t have one yet, you can obtain it from the OpenAI dashboard.

Tasks

Once you have set up an OpenAI client, you can add it to your job and start using the provided tasks:

Chat Completions

Given a list of messages comprising a conversation, the model will return a response.

Assistants (Beta)

Build assistants that can call models and use tools to perform tasks.

Files

Upload files to use with assistants and fine-tuning.

Images

Given a prompt and/or an input image, the model will generate a new image.

Fine-Tuning Jobs

Manage fine-tuning jobs to tailor a model to your specific training data.

Models

List and describe the various models available in the API.

Completions (Legacy)

Given a prompt, the model will return one or more predicted completions.
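The tasks above wrap OpenAI's REST endpoints. As a rough illustration, the sketch below builds request bodies for three of them (chat completions, image generation, and fine-tuning jobs) in plain TypeScript, without using the integration itself. It assumes the tasks accept the same parameters as the public OpenAI API; the helper names (`chatCompletionBody`, `imageGenerationBody`, `fineTuningJobBody`), the model names, and the file ID `file-abc123` are hypothetical placeholders.

```typescript
// Sketch only: helper names, model names, and the file ID are hypothetical.
// Field names follow the public OpenAI REST API.

type Role = "system" | "user" | "assistant";

interface ChatMessage {
  role: Role;
  content: string;
}

// Body for POST /v1/chat/completions: a model plus the conversation so far.
function chatCompletionBody(model: string, messages: ChatMessage[]) {
  if (messages.length === 0) {
    throw new Error("A chat completion needs at least one message");
  }
  return { model, messages };
}

// Body for POST /v1/images/generations: a text prompt plus output options.
function imageGenerationBody(prompt: string, n = 1, size = "1024x1024") {
  return { prompt, n, size };
}

// Body for POST /v1/fine_tuning/jobs: the ID of an uploaded training file
// and the base model to tune.
function fineTuningJobBody(trainingFile: string, model: string) {
  return { training_file: trainingFile, model };
}

// Usage with placeholder values.
const chat = chatCompletionBody("gpt-3.5-turbo", [
  { role: "system", content: "You are a concise assistant." },
  { role: "user", content: "Summarize this paragraph in one sentence." },
]);
const image = imageGenerationBody("A watercolor lighthouse at dusk");
const job = fineTuningJobBody("file-abc123", "gpt-3.5-turbo");

console.log(JSON.stringify({ chat, image, job }));
```

Each builder only assembles a JSON body; authenticating and sending the request is left to whichever client you use.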

Usage as Universal Client

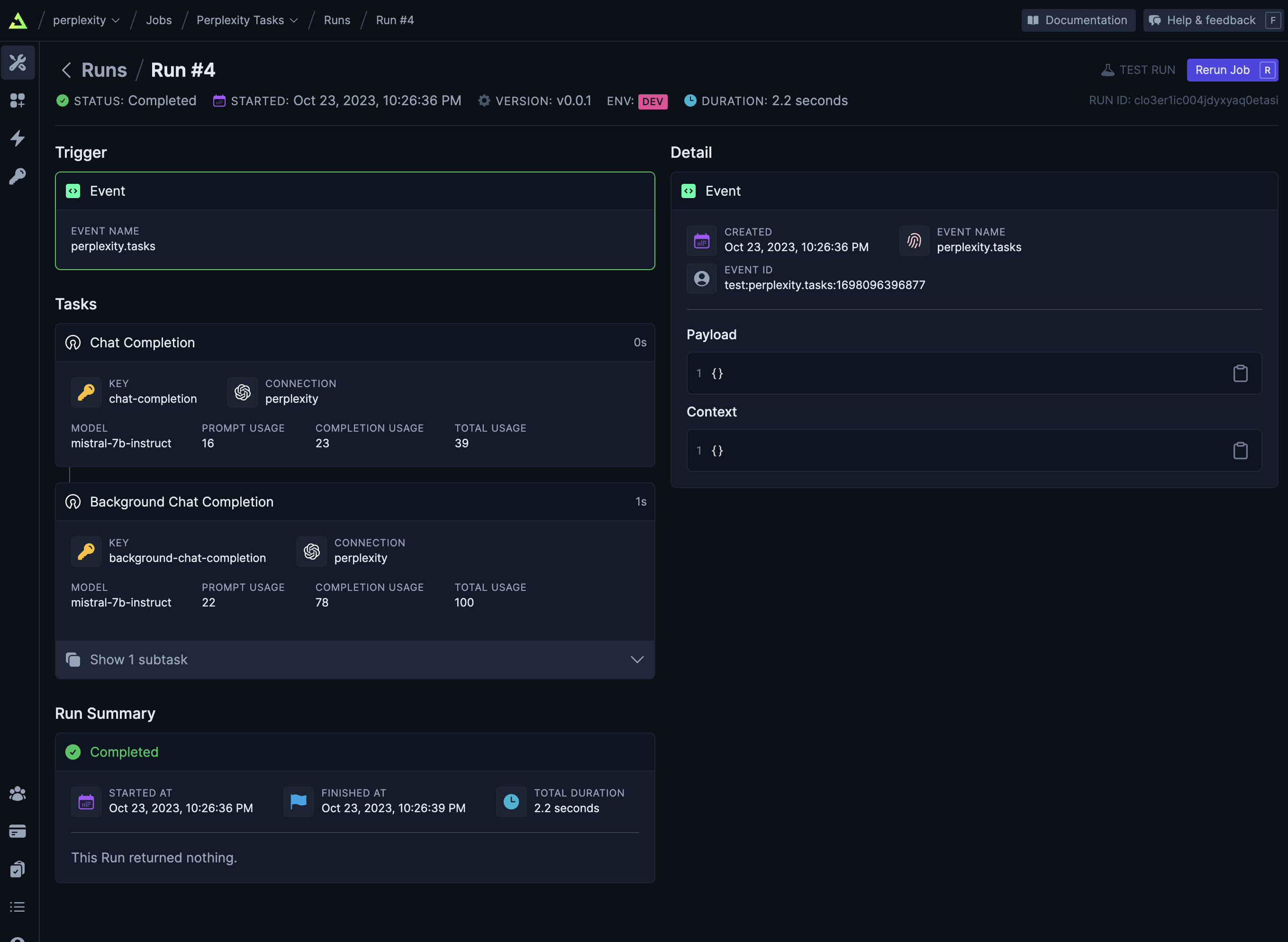

You can use our OpenAI integration as a universal client to interact with other OpenAI-compatible APIs, such as Perplexity.ai.
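"OpenAI-compatible" means, at the HTTP level, that a provider accepts the same request shape at the same paths; mainly the base URL and the API key change. The plain-TypeScript sketch below (not the integration's own API) shows this for the chat completions endpoint. The `ProviderConfig` type, the `chatCompletionsRequest` helper, and the `PERPLEXITY_API_KEY` variable name are hypothetical; the two base URLs are the public OpenAI and Perplexity endpoints.

```typescript
// Sketch only: ProviderConfig, chatCompletionsRequest, and the
// PERPLEXITY_API_KEY variable name are hypothetical.

interface ProviderConfig {
  baseURL: string;
  apiKeyEnvVar: string; // name of the env var holding this provider's key
}

const OPENAI: ProviderConfig = {
  baseURL: "https://api.openai.com/v1",
  apiKeyEnvVar: "OPENAI_API_KEY",
};

const PERPLEXITY: ProviderConfig = {
  baseURL: "https://api.perplexity.ai",
  apiKeyEnvVar: "PERPLEXITY_API_KEY", // hypothetical name
};

// Build the URL and headers for a chat completions call against `provider`.
// The env record is passed in explicitly so the function has no side effects.
function chatCompletionsRequest(
  provider: ProviderConfig,
  env: Record<string, string | undefined>,
): { url: string; headers: Record<string, string> } {
  const apiKey = env[provider.apiKeyEnvVar];
  if (!apiKey) {
    throw new Error(`Missing ${provider.apiKeyEnvVar}`);
  }
  // Trim trailing slashes so "https://host/v1/" and "https://host/v1" agree.
  const base = provider.baseURL.replace(/\/+$/, "");
  return {
    url: `${base}/chat/completions`,
    headers: {
      Authorization: `Bearer ${apiKey}`,
      "Content-Type": "application/json",
    },
  };
}

const req = chatCompletionsRequest(PERPLEXITY, { PERPLEXITY_API_KEY: "pplx-test" });
console.log(req.url); // https://api.perplexity.ai/chat/completions
```

The request body format is shared, but model names are provider-specific, so a model ID valid for OpenAI will generally not work against Perplexity.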

Example jobs

Code examples

Check out pre-built jobs using OpenAI in our API section.